GitHub Contributions Graph: Analyzing PageRank & Proving The 6 Handshakes Theory

Vadim Markovtsev, source{d}

Vadim Markovtsev, source{d}.

Vadim Markovtsev, source{d}

Like @acabunoc, Lead Developer for Open Source Engagement at the Mozilla Foundation who is developing new ways to engage contributors on open source projects.

Like the Dat project who are working openly to develop a peer to peer tool for distributing datasets in science, journalism and government.

Like the Federal Source Code Policy on GitHub released by The White House, who this year solicited community feedback on GitHub.

Like this one opened in the Go programming language repo that used open source data on Google’s BiqQuery to help decide whether to add new functionality to a Go standard library.

Commit datasets:

BigQuery will charge you money.

There are no GitHub user <-> email associations in both datasets.

We need to do identity matching.

mcuadros@gmail.com

maximo@sourced.tech

mcuadros@maximos-macbook.local

mcuadros@maximos-mac-pro.local

root@localhost

At large scale, it is useless to distinguish developers and repositories.

Armin is in the center.

8k nodes, 11k edges.

200k nodes, 300k edges.

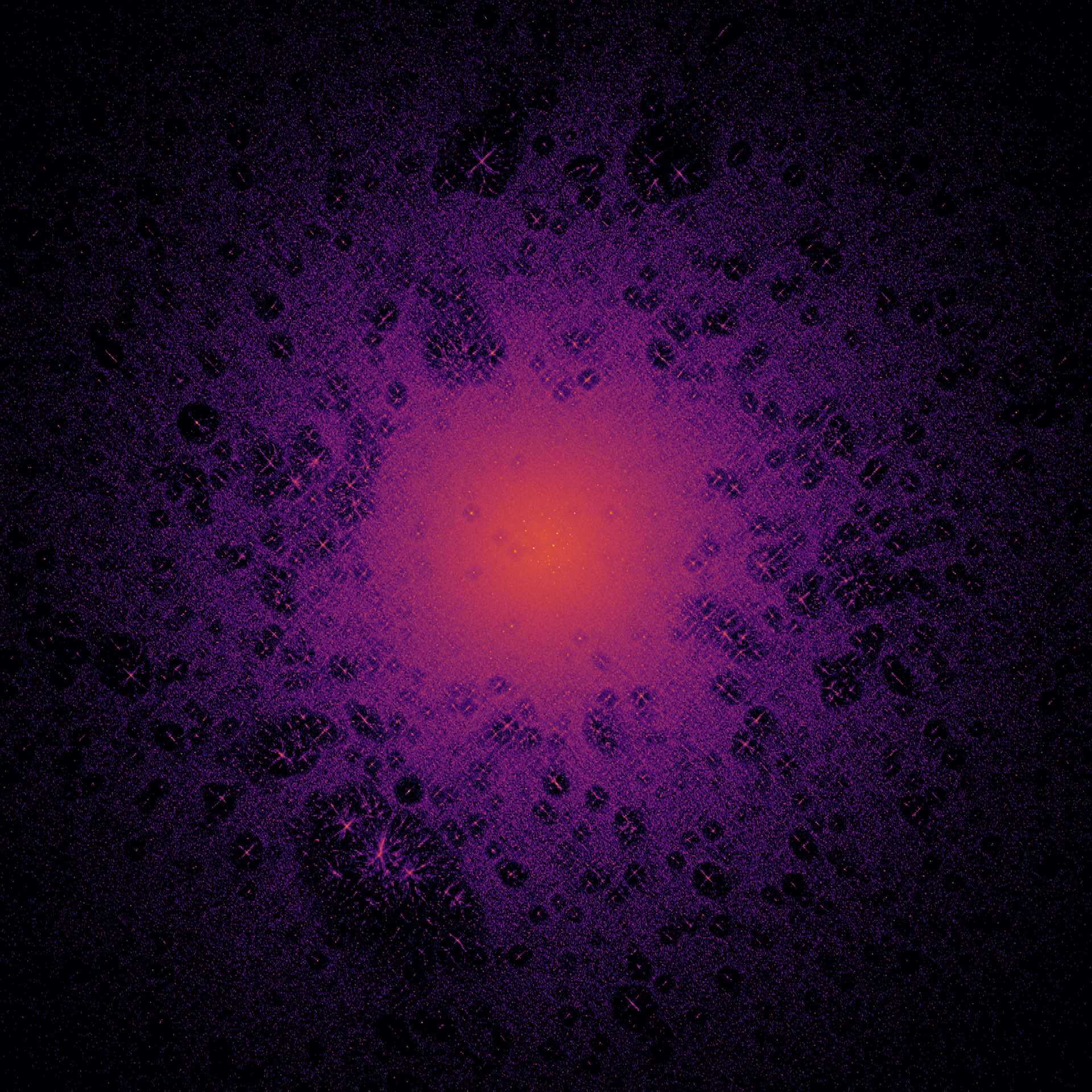

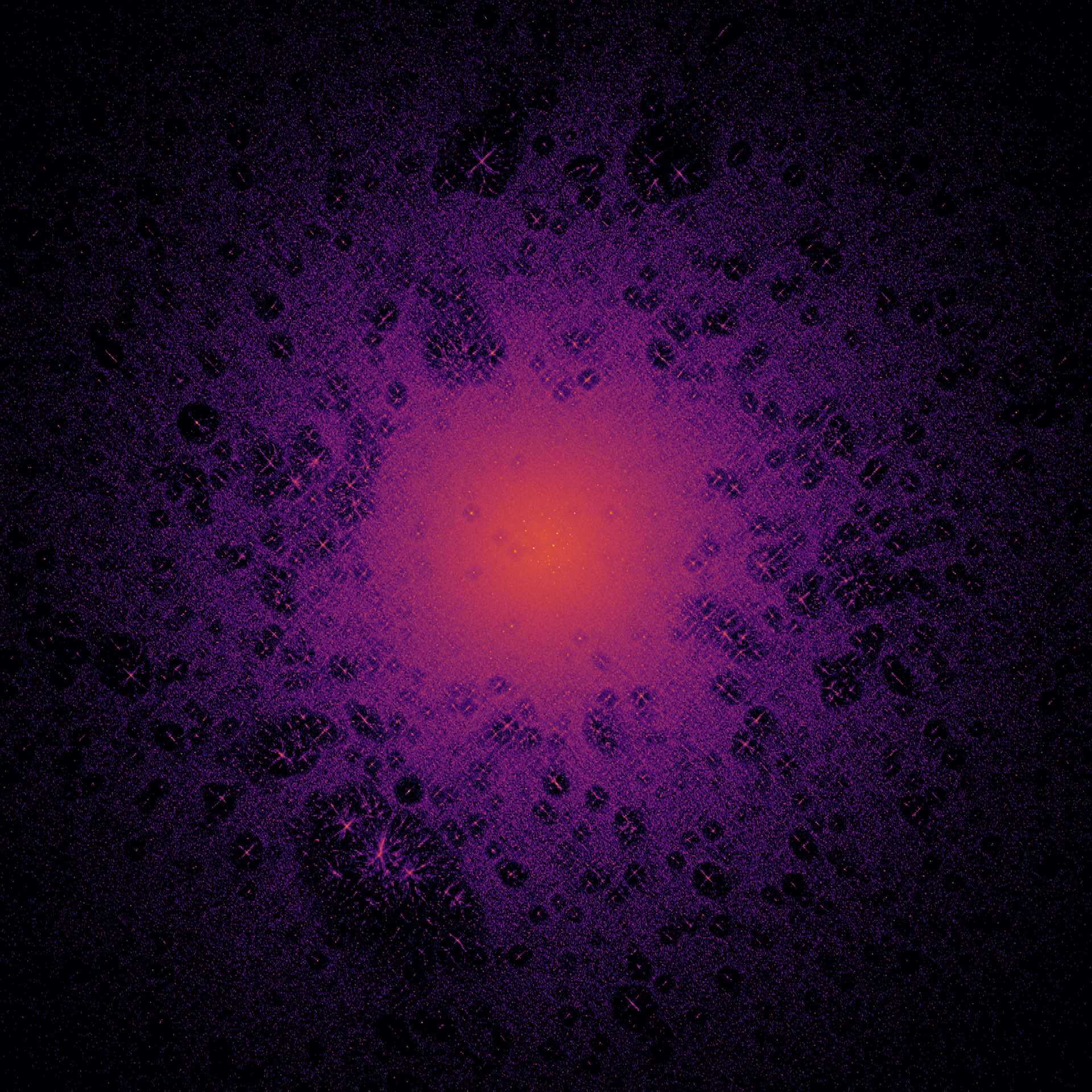

Color depends on how many hops the dot is distant from the root.

Actually Rob Pike did not contribute to cmars/oo and cmars/tools.

Author forgery is perfectly possible on GitHub.

Cij is the number of commits done by developer i to repository j

Cij = Cji

Non-zero elements: <10-7

We normalize values in every column.

$$\sum_i C_{ij}=1$$

The matrix is no longer symmetric.

How to plot 22,000,000 dots?

Given that two developers "know" each other if they contributed to the same project, how far are they from each other on average, if there is a connection?

We run a series of graph traversals

Any type works: DFS, BFS, RFS

Python, 22M nodes - 5 min

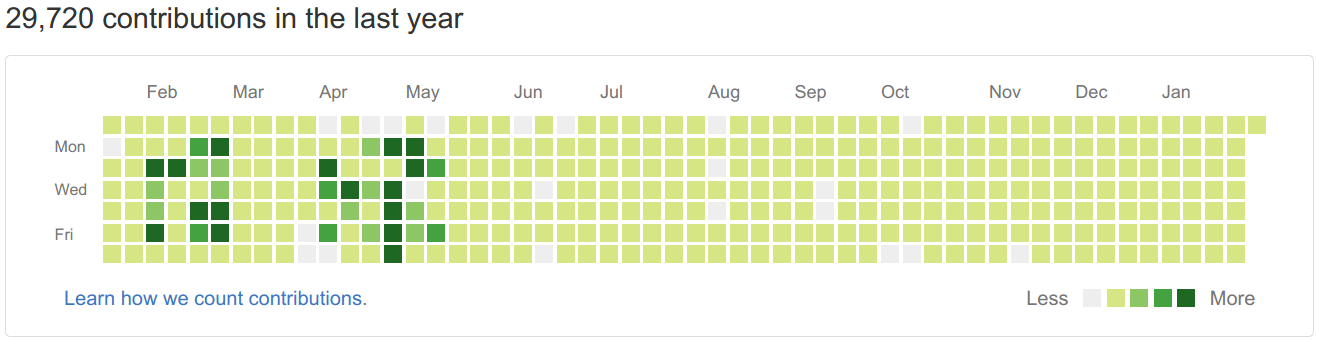

Result: 10200912, 10229, ...

The size is 10200912 / 22000000 < 50% of the whole graph

33% of the developers; this is 11% of the whole GitHub userbase

There are \[\frac{N_{core}(N_{core} + 1)}{2}\] possible distance pairs

That is, 2.3 trillions (1012)

Do we need to evaluate them all? No!

Statistics for the win! Let's define our random variable as the distance between two random developers.

For sufficiently large Nsample, the distribution of \[{\displaystyle {\hat {p_d}}} = \frac{X_d}{N_{sample}}\] will be closely approximated by a normal distribution.

Sample size on Wikipedia.Which algorithm to choose?

We need to avoid the "generational explosion" as much as possible.

What developers and repositories are the most important?

"Importance" is defined recursively: we gain ego if we contribute to repositories to which other important developers contribute.

\[x_ {AR} = \frac{w_ 1 x_ 1 + w_ 2 x_ 2 + w_ 3 x_ 3 + ...}{\lambda} = \frac{1}{\lambda} \sum w_ i x_ i\]

We do not distinguish developers and repositories here.

$$\sum_i C_{ij}=1$$$$C_{ij} \neq C_{ji}$$

If \(C_{ij} > 0\) then \(\vec{x}\) is the first eigenvector of C and \(x_i\) is positive.

This is not our case: only 10-7 elements are positive, the rest are zeros.

Larry and Sergey had exactly the same objective and problems with WWW.

Cij is 1 if web page i links to j and 0 otherwise.

They invented the trick to make C have all elements positive.

They called it PageRank.

$$ G = \beta C + \frac{1-\beta}{N}\left( \begin{array}{cccc} 1 & 1 & ... & 1 \\ 1 & 1 & ... & 1 \\ ... & ... & \ddots & ... \\ 1 & 1 & ... & 1 \end{array} \right) $$

β is the dampening or random walk factor, usually 0.85.

Our matrix becomes acyclic and irreducible, aka the Google matrix.

An efficient algorithm to calculate the first eigenvector.

$$x_{i+1} = G\cdot x_i$$

Sparse friendly.

22M nodes need less than 50 iterations.

GitHub specific: there are no "dead ends".

def page_rank(m, beta=0.85, niter=50):

N = m.shape[0]

x = numpy.ones(N, dtype=numpy.float32) / N

for i in range(niter):

x_next = m.dot(x) * beta + (1 - beta) / N

xdiff = numpy.linalg.norm(x - x_next, ord=1)

x = x_next

return x

Ryan Baumann aka ryanfb (at the time the research was conducted - Jan 2017)

Ryan created more than 1,000 repositories, and each repository gives a small amount of importance.

Link farm failure.

Identity matching is not perfect, so the leaderboard is polluted with such anomalies.

PageRank works to compare specific people.

| Person | PageRank, 10-5 |

|---|---|

| Linus Torvalds | 2.7 |

| Armin Ronacher | 0.8 |

| Maximo Cuadros | 0.26 |

| Vadim Markovtsev | 0.16 |

We are hiring!